AI pulse last 7 days

Daily AI pulse from YouTube, blogs, Reddit, HN. Ruthlessly filtered.

Sources (41)

- critical · Andrej Karpathy

Former director of AI at Tesla, OpenAI cofounder. Every video is gold.

- critical · Anthropic

Official Anthropic channel. Every Claude release.

- critical · ComfyUI Blog

Release log for ComfyUI integrations: Luma Uni-1, GPT Image 2, ACE-Step music gen, Seedance. Covers video, image, music, and workflow.

- critical · OpenAI Blog

Official OpenAI blog. All releases.

- critical · Simon Willison's Weblog

The best AI 'thinker'. Daily posts, deep insights, low hype rate.

- high · AI Explained

In-depth analysis of papers and benchmarks, low hype rate.

- high · AI Jason

Practical tutorials on Claude Code, MCP, and vibe-coding workflows.

- high · Ben's Bites

Daily AI digest, creator-friendly tone. Codex, model releases, agentic AI.

- high · Cole Medin

Vibe coding + agentic workflows + Claude Code MCP integrations.

- high · Fal AI Blog

Fal hosts most new AI image/video models, so its blog gives early signals of launches.

- high · HN: 3D & Gaussian Splatting

HN signal for generative 3D: Gaussian Splatting, NeRF, image-to-3D. Threshold of 20 points because it is a niche category (top historic post: 182 pts).

- high · HN: AI agents / MCP

HN posts about agents, MCP, and vibe coding with at least 100 points.

- high · HN: Claude / Anthropic

HN posts mentioning 'Claude' or 'Anthropic' with at least 100 points.

- high · Hugging Face Blog

Releases for image, video, audio, and 3D models. Some posts are tech-heavy; the Gemini relevance filter will remove the noise. Downgraded from critical: too much volume for 'must-read' status.

- high · IndyDevDan

Claude Code power user: prompts, hooks.

- high · Interconnects (Nathan Lambert)

AI policy + research analysis. Low hype rate, opinionated.

- high · Latent Space

Swyx's podcast + blog: interviews with founders and engineering deep dives.

- high · Matt Wolfe

Comprehensive AI tools weekly digest. ~700K subs.

- high · Matthew Berman

AI news, model release reviews, agent demos. High output.

- high · r/aivideo

AI video community: Sora, Veo, Runway, Kling, LTX. What actually surprises creators.

- high · r/ClaudeAI

The Claude community: power users, tips, problems.

- high · r/LocalLLaMA

Open-source LLMs, running locally, benchmarks without the hype.

- high · r/StableDiffusion

The largest open-source image-gen community (700k+ users). Model launches, LoRA, ComfyUI workflows.

- high · Riley Brown

Vibe coding, AI builder workflows, Cursor + Claude tutorials.

- high · The Decoder

German AI news outlet publishing in English, good for breaking news.

- high · Theo - t3.gg

TypeScript + AI dev workflows. Hot takes, narrative-driven.

- high · Yannic Kilcher

Paper reviews and deep dives into AI research.

- low · AI Weirdness

Janelle Shane: playful AI experiments, image-gen quirks. Low volume, unique perspective.

- medium · bycloud

Digestible AI papers, somewhere between Two Minute Papers and Yannic Kilcher.

- medium · Creative Bloq

Design industry: where AI encroaches on classic graphic disciplines.

- medium · Fireship

100-sec format, often AI/LLM + tech news.

- medium · fxguide

VFX and film industry: more and more AI in the pipeline. Professional perspective.

- medium · Greg Isenberg

Solo-founder vibe: builds products with AI, podcasts with indie hackers.

- medium · r/ChatGPTCoding

Vibe-coding tips, IDE setups, prompts. A mix of all models.

- medium · r/comfyui

ComfyUI workflows: custom nodes, JSON workflows, optimizations.

- medium · r/midjourney

Midjourney community: v7+ launches, style references, prompt patterns.

- medium · r/runwayml

Runway-specific community: feature launches, prompt patterns, comparisons with competitors.

- medium · r/SunoAI

Suno music-gen community: new model versions, lyric prompting techniques. Audio AI has a weak RSS ecosystem.

- medium · Tina Huang

AI workflows for data science, practical applications.

- medium · Two Minute Papers

Short summaries of AI papers, great for a quick scan.

- medium · Wes Roth

AI news with a more clickbait tone; the Gemini filter sifts out the hype.
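The per-feed point thresholds mentioned for the HN sources above (20 for the niche 3D feed, 100 for the others) could be applied with a filter along these lines. This is only a sketch: the `Post` type, the feed keys, and the sample posts are hypothetical and do not come from the feed's actual implementation.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    points: int
    feed: str  # hypothetical identifier of the HN feed that matched the post


# Minimum points per feed, taken from the source descriptions above.
# The keys are made-up identifiers used only for this illustration.
MIN_POINTS = {
    "hn_3d_gaussian_splatting": 20,  # niche category, lower bar
    "hn_ai_agents_mcp": 100,
    "hn_claude_anthropic": 100,
}


def passes_threshold(post: Post) -> bool:
    """Keep a post only if it meets the minimum score of its feed."""
    threshold = MIN_POINTS.get(post.feed)
    # Unknown feeds are rejected rather than silently accepted.
    return threshold is not None and post.points >= threshold


posts = [
    Post("Image-to-3D splatting demo", 35, "hn_3d_gaussian_splatting"),
    Post("Yet another MCP server", 40, "hn_ai_agents_mcp"),
    Post("Claude release notes", 250, "hn_claude_anthropic"),
]
kept = [p.title for p in posts if passes_threshold(p)]
print(kept)
```

A per-feed table keeps the lower bar for the niche category explicit instead of hiding it in a global default.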

[HIPHOP] Late Night Hustle | Dark Emotional Hip Hop / Trap Song

See how SunoAI can generate mood-specific hip-hop/trap instrumentals, perfect for creative projects or background music, even if you're not a musician.

User u/Guitardep shared "Late Night Hustle," an AI-generated dark emotional hip-hop/trap song created with SunoAI. The track, posted on r/SunoAI, is described as an instrumental blend of modern trap drums, fast hi-hats, deep bass, and atmospheric textures, designed for late-night creative sessions. This piece exemplifies SunoAI's growing capability to produce genre-specific and mood-driven music, offering non-musicians a powerful tool for generating background tracks or inspiration. It showcases the practical application of AI in crafting complex, atmospheric soundscapes for various creative needs.

r/SunoAI·creative_work·05/07/2026, 01:30 PM·/u/Guitardep

Open-sourcing Banodoco Hivemind: 1M+ Discord messages from artists and engineers working deeply with open image/video models, packaged as an agent skill

A massive dataset of real-world discussions from artists and engineers using open image/video AI models is now available, offering a unique resource for building smarter creative…

The Banodoco Hivemind, a substantial dataset comprising over 1 million Discord messages from artists and engineers, has been open-sourced. This collection captures deep, practical discussions around open image and video AI models, offering insights into real-world usage, problem-solving, and creative applications. Packaged as an "agent skill," this resource is designed to enhance the capabilities of AI agents, allowing them to better understand and assist users in creative workflows. It provides a novel foundation for developing more context-aware and helpful AI assistants, moving beyond generic training data to specialized, community-driven knowledge.

r/comfyui·tooling·05/07/2026, 01:30 PM·/u/PetersOdyssey

Open-sourcing Banodoco Hivemind: 1M+ Discord messages from artists and engineers working deeply with open image/video models, packaged as an agent skill

This open-sourced database of over a million Discord messages offers practical insights and best practices for open image/video models, directly queryable by AI agents or users.

Banodoco Hivemind has been open-sourced, providing access to over a million Discord messages collected over three years from artists and engineers deeply engaged with open image and video models. This valuable dataset, previously locked within Discord, is now available as an "agent skill," allowing AI agents or individual users to query it for best practices, comparisons, and specific settings related to various models like Wan Animate or LTX. The creator, /u/PetersOdyssey, emphasizes its utility for surfacing previously siloed knowledge and plans for live updates and eventual public web search indexing. This release offers a unique resource for understanding real-world application and troubleshooting of open-source creative AI tools.

r/StableDiffusion·tooling·05/07/2026, 01:30 PM·/u/PetersOdyssey

[Bollywood pizza] LE ROSE FIORIRANNO PER NOI

This Reddit post features a unique AI-generated music track from Suno AI, blending "Bollywood pizza" themes, offering a quick listen to the platform's creative capabilities.

A Reddit user, u/Fun_Operation8440, recently shared an AI-generated music track titled "LE ROSE FIORIRANNO PER NOI" on the r/SunoAI subreddit. The piece is notably described with the intriguing tag "[Bollywood pizza]", indicating a fusion of genres or styles. This creation serves as a practical demonstration of Suno AI's capabilities in generating diverse and creatively themed musical compositions. While not a new model release or technical breakthrough, it highlights the platform's potential for hobbyists and creative non-developers to produce unique audio content. The track itself is linked to a YouTube video, allowing listeners to experience this specific AI-driven artistic output.

r/SunoAI·creative_work·05/07/2026, 01:18 PM·/u/Fun_Operation8440

SenseNova U1 Interleaved Output: From Single Prompt to Consistent Visual Set

Discover SenseNova U1's new Interleaved function to generate consistent, structured content that seamlessly blends text and images from a single prompt, perfect for tutorials or c…

SenseNova's U1 Fast model has introduced an "Interleaved" output function, allowing users to generate continuous, structured content that combines text and images from a single complex prompt. Unlike traditional single-image generators, this feature aims to process intricate instructions, such as creating a sourdough bread tutorial, by weaving together visual and textual elements logically. The user, /u/ReonNYK, highlights its potential for maintaining stylistic consistency and narrative coherence across multiple outputs, suggesting it could be superior for content creation like comic strips. This represents a significant advancement in multi-modal AI generation, moving beyond isolated images to more integrated storytelling.

r/StableDiffusion·tooling·05/07/2026, 01:17 PM·/u/ReonNYK

[edm/metal/industrial] Copyright This Shit

Explore a user-shared example of Suno AI's impressive ability to generate complex, multi-genre music (EDM, metal, industrial), sparking thoughts on AI-generated content copyright.

Reddit user /u/moonysugar showcased an AI-generated music track on r/SunoAI, blending EDM, metal, and industrial genres. Titled "Copyright This Shit," the post highlights the user's confidence in the piece's quality, suggesting it rivals human-created works. This submission serves as a practical demonstration of Suno AI's advanced capabilities in producing complex, multi-genre musical compositions. It implicitly raises important questions about the copyright status and intellectual property rights of creative content generated by artificial intelligence. The post illustrates how AI tools empower hobbyists to create sophisticated audio, blurring lines between human and machine artistry.

r/SunoAI·creative_work·05/07/2026, 01:10 PM·/u/moonysugar

Elon doubled limits

Free ChatGPT users gain a much more capable GPT-5.5 Instant model and spreadsheet integration, while paid Claude users can now utilize twice as much capacity and leverage new agen…

OpenAI has rolled out GPT-5.5 Instant to all free ChatGPT users, offering substantial improvements in vision, PDF comprehension, web search, and memory, alongside a 52.5% reduction in hallucinations compared to its predecessor. Additionally, ChatGPT now directly integrates with Excel and Google Sheets, enabling users to build sheets, analyze data, and generate formulas within spreadsheets. Anthropic has also significantly boosted its offerings, doubling the usage limits for all paid Claude plans by leveraging SpaceX's Colossus 1 data center. Furthermore, Claude Managed Agents received new capabilities like "Dreaming" for memory, "Outcomes" for success grading, and "Multi-agent orchestration." These developments collectively enhance accessibility and power for both free and paid AI users,…

Ben's Bites·news·05/07/2026, 01:03 PM

AI models follow their values better when they first learn why those values matter

A new Anthropic study shows that teaching AI models *why* certain values matter, before teaching specific behaviors, makes them significantly better at following those values in a…

A study from the Anthropic Fellows Program reveals a significant advancement in aligning large language models (LLMs) with intended values. Researchers discovered that training an LLM on texts explicitly explaining its desired values *before* teaching it specific behaviors leads to substantially better adherence to those principles. This "values-first" approach enables models to maintain their ethical guidelines more effectively, even when encountering novel situations not present in their initial training data. This method represents a crucial step in AI safety, moving beyond simple behavioral examples to instill a deeper understanding of underlying values, potentially leading to more robust and trustworthy AI systems.

The Decoder·news·05/07/2026, 12:45 PM·Maximilian Schreiner

Roblox Scientoloty Speedrun made with SuperGrok

See a humorous AI-generated video "speedrun" in a Roblox style, showcasing the creative capabilities of the SuperGrok tool for generating unique content.

A Reddit user, /u/ginadaspokemon, shared a unique AI-generated video titled "Roblox Scientoloty Speedrun" created with a tool called SuperGrok. This creative work showcases the potential of AI video generation to produce highly specific and humorous content. The video adopts a distinct Roblox-like aesthetic, demonstrating SuperGrok's capability to generate stylized narratives. It provides a concrete example of how AI tools can be leveraged by hobbyists and creative non-developers to create engaging and niche video content, moving beyond generic outputs. This highlights the evolving landscape of AI-powered creative expression in video.

r/aivideo·creative_work·05/07/2026, 12:36 PM·/u/ginadaspokemon

Moodboard 6 - Digital landscape

See a stunning "digital landscape" image created with Midjourney, complete with the exact parameters used for inspiration and experimentation.

Reddit user /u/Heath_co shared a captivating "Moodboard 6 - Digital landscape" image, showcasing the creative capabilities of Midjourney. The post includes the specific parameters used: --profile e762978 --v 8.1 --stylize 1000 --hd. This example highlights how precise parameter tuning can achieve distinct aesthetic results, particularly with a high stylize value and the --hd flag for enhanced detail. While not a new feature release, it provides a concrete instance of artistic expression and technical application for those exploring AI image generation. It serves as valuable inspiration for hobbyists and creative non-developers looking to replicate or adapt similar styles.

r/midjourney·creative_work·05/07/2026, 12:17 PM·/u/Heath_co

Help with Duet Voice Assignment in V5.5 (male/female alternating)

If you're using Suno AI v5.5 for duets, be aware that precise line-by-line voice assignment for multiple characters might be unreliable, often misassigning vocals despite detailed…

A user on Reddit is seeking help with inconsistent voice assignment in Suno AI v5.5, specifically when attempting to create duet or multi-character songs with alternating male and female vocals. Despite employing various prompting techniques, including explicit [Female Voice][Character] tags, style prompts like "vocals alternating male baritone and female soprano," and single-letter tags, the AI frequently misassigns lines, ignoring the specified character voices about 50% of the time. This issue highlights a current challenge in achieving precise vocal control within Suno AI, indicating that reliable line-by-line duet assignment remains an elusive feature for users. The problem persists even with the latest version, affecting complex musical compositions like music hall patter songs.

r/SunoAI·tooling·05/07/2026, 12:16 PM·/u/AloneTradition5725

[Melodic Metal + Chiptune] Mega Man X7 CODE CRUSH by Game HUB Metal Covers

Explore how SunoAI can be used to create sophisticated, genre-blending music covers, like this impressive melodic metal and chiptune rendition of a Mega Man X7 track.

A Reddit user on r/SunoAI shared a fan-made musical cover titled "[Melodic Metal + Chiptune] Mega Man X7 CODE CRUSH by Game HUB Metal Covers." This creative piece demonstrates the advanced capabilities of AI music generation tools like SunoAI to blend distinct genres, specifically melodic metal and chiptune, into a cohesive and engaging track. It showcases how hobbyists and creative non-developers can leverage AI to produce complex, stylized music covers, offering inspiration for personalized content creation. The piece highlights SunoAI's potential for generating specific stylistic elements and intricate arrangements from user prompts.

r/SunoAI·creative_work·05/07/2026, 12:07 PM·/u/Necessary_Olive_3027

Made this with Nano + Kling 3

See a user-generated AI video created with Nano and Kling 3 to get a sense of current creative capabilities and tool combinations in AI video generation.

A Reddit user, /u/Entire-Turnover-8560, posted an AI-generated video created using a combination of tools identified as "Nano" and "Kling 3". This submission on r/aivideo serves as a practical demonstration of current AI video generation capabilities, particularly for creative hobbyists interested in the output quality and stylistic potential of these models. While specific details about "Nano" are not provided, "Kling 3" likely refers to Kuaishou's advanced video generation model, known for its high-fidelity outputs. The post highlights how these tools can be combined to produce compelling visual content, offering inspiration for those exploring AI in creative workflows.

r/aivideo·creative_work·05/07/2026, 11:25 AM·/u/Entire-Turnover-8560

feat: Add Mimo v2.5 model support by AesSedai · Pull Request #22493 · ggml-org/llama.cpp

A new, powerful multimodal AI model, Mimo v2.5, with a massive 1M token context window and MoE architecture, is now supported by `llama.cpp`, making it accessible for local experi…

The `llama.cpp` project, widely used for local inference of large language models, has added support for the new Mimo v2.5 model through a recent pull request. The update lets users run a multimodal Mixture of Experts (MoE) model locally, provided their hardware can hold it. Mimo v2.5 features a sparse MoE architecture with 310B total parameters (15B activated), a 1M token context length, and multimodal capabilities spanning text, image, video, and audio, supported by dedicated 729M-param vision and 261M-param audio encoders. The integration makes local experimentation with the model more feasible.

r/LocalLLaMA·model_release·05/07/2026, 11:23 AM·/u/jacek2023
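To put the 310B total / 15B activated figures in perspective, the arithmetic below estimates the active fraction per token and the raw weight storage at a few precisions. It is a rough sketch: the chosen bit widths are hypothetical examples, and the estimate ignores the KV cache, the vision and audio encoders, and runtime overhead.

```python
TOTAL_PARAMS = 310e9   # total parameters, as stated in the post
ACTIVE_PARAMS = 15e9   # parameters activated per token, as stated in the post


def weight_gib(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GiB (weights only, no overhead)."""
    return params * bits_per_weight / 8 / 2**30


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"active fraction per token: {active_fraction:.1%}")

# Hypothetical precisions chosen for illustration, not taken from the post.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: ~{weight_gib(TOTAL_PARAMS, bits):.0f} GiB")
```

Under these assumptions, 4-bit weights alone come to about 144 GiB: only a small share of the model is used per token, but all experts still have to be stored somewhere.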

The Acorn Throne (2026) lol

Check out this short, speculative AI-generated video titled 'The Acorn Throne (2026)' for a glimpse into creative AI applications.

A Reddit user, /u/Helpmefixit1234, posted a link to an AI-generated video titled "The Acorn Throne (2026)" on the r/aivideo subreddit. This submission highlights a creative application of AI in video generation, offering a speculative or humorous glimpse into potential future content. While specific details about the AI models or techniques used are not provided in the post, it serves as an example of how individuals are leveraging AI for artistic expression and conceptual storytelling. The "2026" in the title suggests a fictional or forward-looking narrative, adding an intriguing layer to the creative piece.

r/aivideo·creative_work·05/07/2026, 11:22 AM·/u/Helpmefixit1234

Google Deepmind takes a stake in EVE Online studio to test AI models

Google Deepmind is using EVE Online's complex social and economic systems as a massive sandbox to train and test advanced AI agents in human-like environments.

Google Deepmind has acquired a minority stake in CCP Games, the developer of the space MMO EVE Online, to use the virtual world as a testing ground for advanced AI models. Unlike previous Deepmind milestones in Go or StarCraft II, EVE Online provides a persistent, player-driven economy and complex social hierarchy that requires long-term strategic planning. This partnership suggests a shift toward training AI agents capable of navigating intricate human-like systems, markets, and social dynamics. The move could eventually lead to more sophisticated autonomous agents or NPCs within the game's ecosystem. It marks a significant step in using massive multiplayer environments for reinforcement learning at scale.

The Decoder·news·05/07/2026, 11:15 AM·Maximilian Schreiner

Claude's new "Dreaming" feature is designed to let AI agents learn from their mistakes

Claude agents can now "dream" by reviewing past sessions to clean up memory and distill new insights asynchronously, improving performance over time.

Anthropic has introduced a "Dreaming" feature for Claude Managed Agents, enabling them to refine their performance through asynchronous reflection. This process involves reviewing previous agent sessions to identify errors, remove redundant or outdated memory entries, and extract actionable insights for future tasks. Alongside this, Anthropic launched "Outcomes" and "Multiagent Orchestration" into public beta, focusing on goal-oriented evaluation and complex task delegation. Unlike standard memory, Dreaming allows agents to consolidate knowledge without manual intervention, effectively creating a self-improving loop. This update addresses the common issue of memory bloat and context degradation in long-running AI workflows.

The Decoder·tooling·05/07/2026, 10:59 AM·Matthias Bastian

DeepSeek nears $45bn valuation as China’s ‘Big Fund’ leads investment talks

DeepSeek is in talks for funding at a $45B valuation, which would help it remain a major force in the open-weights LLM space.

DeepSeek, the developer of the highly efficient V3 and R1 models, is reportedly in talks for its first major investment round that could value the company at $45 billion. The funding is expected to be led by China’s National Integrated Circuit Industry Investment Fund, known as the 'Big Fund.' This move marks a significant shift as DeepSeek, previously funded by high-frequency trading firm High-Flyer Quant, seeks massive capital to scale its compute resources. The valuation would place DeepSeek among the world's most valuable AI startups, rivaling US-based giants like Anthropic. For the local LLM community, this suggests a long-term commitment to developing state-of-the-art models that often challenge proprietary alternatives.

r/LocalLLaMA·news·05/07/2026, 10:21 AM·/u/Nunki08

Running Qwen3.5 / Qwen3.6 with NextN MTP (Multi-Token Prediction) speculative decode in llama.cpp — single RTX 3090 Ti GPU guide

Speed up Qwen 3.5/3.6 models by nearly 3x on a single GPU using NextN Multi-Token Prediction in llama.cpp with this specific build and quantization guide.

This technical guide details how to implement NextN Multi-Token Prediction (MTP) for the Qwen 3.5 and 3.6 model families using llama.cpp. By leveraging MTP, users can achieve approximately 2.9x faster decoding speeds with zero loss in output quality, as the prediction heads are natively integrated into these models. The process currently requires building llama.cpp from specific pull requests (#22400 and #22673) or using a provided fork. A critical step involves a specific quantization override (--tensor-type nextn=q8_0) to prevent output corruption. Benchmarks show the 35B MoE variant reaching an impressive ~150 tokens per second on a single RTX 3090 Ti.

r/LocalLLaMA·tutorial·05/07/2026, 09:56 AM·/u/yes_i_tried_google
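Why speculative decoding can speed up generation without changing the output is easier to see in a toy version. The sketch below shows generic greedy draft-then-verify decoding. It is not llama.cpp's MTP implementation, and the two "models" are made-up integer functions used only to exercise the logic.

```python
from typing import Callable, List

Token = int
NextToken = Callable[[List[Token]], Token]  # greedy next-token function


def speculative_step(context: List[Token], draft: NextToken,
                     target: NextToken, k: int) -> List[Token]:
    """Return the tokens accepted in one draft-then-verify step."""
    # 1. The cheap drafter proposes k tokens autoregressively.
    proposed: List[Token] = []
    for _ in range(k):
        proposed.append(draft(context + proposed))

    # 2. The target checks each proposal in order. A real engine would
    #    verify all k positions in a single batched forward pass.
    accepted: List[Token] = []
    for tok in proposed:
        expected = target(context + accepted)
        if tok != expected:
            accepted.append(expected)  # fix the first mismatch and stop
            return accepted
        accepted.append(tok)
    # Every proposal matched, so the target adds one bonus token.
    accepted.append(target(context + accepted))
    return accepted


def generate(context: List[Token], draft: NextToken, target: NextToken,
             k: int, n_tokens: int) -> List[Token]:
    """Generate n_tokens using repeated speculative steps."""
    out = list(context)
    while len(out) - len(context) < n_tokens:
        out.extend(speculative_step(out, draft, target, k))
    return out[len(context):len(context) + n_tokens]


def greedy(context: List[Token], target: NextToken, n_tokens: int) -> List[Token]:
    """Baseline: plain greedy decoding with the target only."""
    out = list(context)
    for _ in range(n_tokens):
        out.append(target(out))
    return out[len(context):]


# Toy models: the target counts upward; the drafter agrees except when
# the next value is a multiple of 5, where it wrongly proposes 0.
target_model = lambda ctx: ctx[-1] + 1
draft_model = lambda ctx: ctx[-1] + 1 if (ctx[-1] + 1) % 5 else 0

print(generate([0], draft_model, target_model, k=3, n_tokens=12))
```

Every emitted token is either confirmed or produced by the target, so the result matches plain greedy decoding even when the drafter is wrong. Sampling-based variants need an additional acceptance rule that is not shown here.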

I built a tool to mix two artists on one image with region masks — Van Gogh + Picasso, no training, arbitrary refs

Mix different artistic styles in specific parts of an image using masks and IP-Adapters without any training or fine-tuning.

A new open-source tool allows users to apply distinct artistic styles to specific regions of an image using spatial masks. Built on Stable Diffusion 1.5, the system utilizes ControlNet (Canny and Tile) for structural integrity and two IP-Adapters for style injection. The technical core involves spatial routing, where each adapter's contribution is masked within the cross-attention layers to prevent 'muddy' averaging of styles. It offers three modes: global mixing, painterly emphasis, and region-specific stylization. While effective, the author notes that aggressive style weights can distort realistic faces and small color details. The project includes a GitHub repository with a Colab notebook and a Hugging Face Space for testing.

r/StableDiffusion·tooling·05/07/2026, 09:24 AM·/u/Longjumping_Gur_937
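The idea of routing each style to its own region, rather than averaging both styles everywhere, can be illustrated on a tiny grid. This is a heavily simplified sketch: the tool applies its masks inside the cross-attention layers, whereas the code below only blends per-cell values, and all names and numbers are hypothetical.

```python
from typing import List

Grid = List[List[float]]


def blend_regions(base: Grid, style_a: Grid, style_b: Grid,
                  mask_a: Grid, mask_b: Grid) -> Grid:
    """Add each style's contribution only where its mask is non-zero."""
    out: Grid = []
    for r, row in enumerate(base):
        out_row = []
        for c, value in enumerate(row):
            value += mask_a[r][c] * style_a[r][c]
            value += mask_b[r][c] * style_b[r][c]
            out_row.append(value)
        out.append(out_row)
    return out


# 2x2 toy "feature map": style A pushes values up, style B pushes them down.
base = [[0.0, 0.0], [0.0, 0.0]]
style_a = [[1.0, 1.0], [1.0, 1.0]]
style_b = [[-1.0, -1.0], [-1.0, -1.0]]

# Style A owns the left column, style B the right column.
mask_a = [[1.0, 0.0], [1.0, 0.0]]
mask_b = [[0.0, 1.0], [0.0, 1.0]]

routed = blend_regions(base, style_a, style_b, mask_a, mask_b)

# Without spatial masks both styles apply everywhere and cancel out.
ones = [[1.0, 1.0], [1.0, 1.0]]
unmasked = blend_regions(base, style_a, style_b, ones, ones)
print(routed, unmasked)
```

In this toy case the masked blend keeps each style distinct in its region, while the unmasked blend collapses to zero, a crude analogue of the 'muddy' averaging the author describes.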

Prompt share: heroine crash landing into mech transformation with a mechanical tiger

Learn how to prompt complex cinematic sequences involving crash landings and mechanical transformations in AI video tools.

This post on r/aivideo showcases a cinematic sequence generated using AI video tools, centered on a heroine's crash landing followed by a mech transformation with a mechanical tiger. The author provides the exact prompt used, which is valuable for creators trying to master complex motion and object consistency. The video demonstrates progress in handling multi-stage actions within a single generation or sequence. By sharing the prompt, the creator offers a template for others to experiment with physics-heavy scenes and sci-fi transformations. This type of community sharing helps bridge the gap between simple text-to-video and professional-grade AI cinematography.

r/aivideo·creative_work·05/07/2026, 09:20 AM·/u/Accomplished-Tax1050

Is anyone actually getting good results with Flux2.DEV?

If you're struggling to get sharp, realistic images from Flux2.DEV, you're not alone; a user reports consistent issues with hazy outputs and a limited LoRA ecosystem, seeking comm…

A Reddit user on r/StableDiffusion, /u/Extension-Yard1918, has reported persistent issues achieving sharp, realistic images with the Flux2.DEV model over several months of testing. Despite efforts like increasing resolution and step count, and experimenting with different samplers and settings, the generated outputs consistently appear hazy, soft, or foggy, failing to match the quality of models like Z-Image Turbo. The user also notes a weak image editing feature and a nearly nonexistent LoRA ecosystem, questioning if the problem lies with the model's training data, VAE, scheduler, or their own workflow. They are seeking practical advice and specific settings from the community to unlock Flux2.DEV's potential.

r/StableDiffusion·opinion·05/07/2026, 09:15 AM·/u/Extension-Yard1918

How Unreal Engine 5 indie game Beastro uses 'paper puppets' to reinvent RPG art

Learn how an indie studio uses Unreal Engine 5 to blend 2D 'paper puppet' aesthetics with 3D environments for a unique RPG look.

Beastro is an upcoming indie RPG that stands out by using a 'paper puppet' art style within Unreal Engine 5. Art director Kate Rado explains that the game's visuals are inspired by puppet theater and tactile, physical objects like food. Instead of traditional 3D modeling for characters, the team uses flat, illustrated assets that move like puppets, creating a charmingly weird atmosphere. This approach allows a small team to achieve a high-fidelity look without the overhead of complex 3D character animation. The project demonstrates how modern engines can be leveraged for non-photorealistic, highly stylized creative directions.

Creative Bloq·creative_work·05/07/2026, 09:00 AM·Alan Wen

So Far This is My Favorite Use-Case for LTX 2.3/ComfyUI

Discover a practical workflow for using the LTX 2.3 video model in ComfyUI to achieve high-quality, consistent video generation on local hardware.

The Reddit community is exploring the capabilities of LTX 2.3, a new video generation model, specifically within the ComfyUI node-based interface. This post demonstrates a high-quality use-case that highlights the model's strengths in temporal consistency and motion fidelity. LTX 2.3 is designed to be more accessible for local execution on consumer GPUs than previous state-of-the-art video models. The author's workflow provides a practical example of how to integrate this model into complex creative pipelines. This demonstration is particularly valuable for creators looking for alternatives to closed-source video tools like Runway or Luma.

r/StableDiffusion·tooling·05/07/2026, 08:33 AM·/u/optimisoprimeo

the man next door

A high-quality example of AI-generated narrative horror, showcasing current capabilities in character consistency and atmospheric storytelling.

The Man Next Door is a short AI-generated video shared on the r/aivideo subreddit, focusing on a suspenseful, uncanny valley narrative. The piece demonstrates significant progress in maintaining character consistency and environmental details across multiple shots, a common challenge in AI cinematography. It utilizes a dark, cinematic aesthetic to evoke a sense of dread, highlighting how creators are moving beyond simple prompt-to-video clips toward structured storytelling. The creator likely employed high-end tools like Runway Gen-3 or Luma Dream Machine, given the fluid motion and lighting quality. This work serves as a benchmark for hobbyists looking to blend AI visuals with traditional suspense tropes.

r/aivideo·creative_work·05/07/2026, 08:18 AM·/u/Parallelkarma

testing LTX 2.3 1.1 distilled on my gpu. pretty much decent for creating ugc content or short tiktok vlog.

Distilled LTX 2.3 enables fast, high-quality local video generation on mid-range GPUs like the RTX 4060 Ti when paired with the latest CUDA/Torch updates.

A user on r/comfyui demonstrates the performance of the distilled LTX 2.3 v1.1 model for generating short-form video content locally. The test highlights significant performance gains when using updated software stacks, specifically Torch 2.11.0 and CUDA 13.0. Running on consumer-grade hardware (RTX 4060 Ti 16GB), the model is capable of producing decent quality UGC and TikTok-style vlogs. The post includes a link to the specific ComfyUI workflow used for these results. The test suggests that high-quality video generation is becoming accessible on mid-range local GPUs.

r/comfyui·tooling·05/07/2026, 08:10 AM·/u/aziib

testing LTX 2.3 v1.1 distilled on my gpu. pretty decent for creating ugc content or short tiktok vlog.

LTX 2.3 v1.1 distilled runs efficiently on mid-range consumer GPUs (RTX 4060 Ti) for short video content when using updated Torch and CUDA drivers.

A user report demonstrates the performance of LTX 2.3 v1.1 distilled for creating short-form video content like TikTok vlogs. Running on an RTX 4060 Ti 16GB, the model shows significant speed improvements when paired with PyTorch 2.11.0 and CUDA 13.0 in ComfyUI. The distilled version of the model is specifically optimized for faster inference while maintaining enough quality for social media use cases. The post highlights the importance of driver and library updates for maximizing performance on consumer-grade hardware, making high-quality video generation more accessible.

r/StableDiffusion·tooling·05/07/2026, 08:10 AM·/u/aziib

why llama.cpp can’t combine speculative decode methods?

Users are seeking to combine MTP and ngram speculative decoding in llama.cpp to maximize speed in coding tasks, but current implementation limits them to one method.

A technical discussion on r/LocalLLaMA highlights a current limitation in llama.cpp regarding speculative decoding methods. A user testing Qwen 3.6 27B with Multi-Token Prediction (MTP) found that while MTP is effective, combining it with ngram speculation would be ideal for agentic coding. Ngram is particularly fast at predicting repeated code blocks, which occurs frequently during file edits. Currently, llama.cpp only supports one speculative method at a time via command-line arguments. The community is exploring whether this is a fundamental architectural constraint or a temporary implementation hurdle that could be resolved to further boost local inference speeds.
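The reason ngram speculation suits repeated code blocks is that it simply looks for an earlier occurrence of the most recent tokens and proposes whatever followed them. The sketch below illustrates that lookup on word-level tokens. It is a generic illustration, not llama.cpp's implementation, and the function name, parameters, and sample history are hypothetical.

```python
from typing import List, Sequence


def ngram_draft(tokens: Sequence[str], n: int = 3, k: int = 4) -> List[str]:
    """Propose up to k draft tokens by copying what followed the most
    recent earlier occurrence of the last n tokens."""
    if len(tokens) <= n:
        return []
    suffix = list(tokens[-n:])
    # Scan backwards over earlier positions, skipping the suffix itself.
    for start in range(len(tokens) - n - 1, -1, -1):
        if list(tokens[start:start + n]) == suffix:
            follow = start + n
            return list(tokens[follow:follow + k])
    return []  # no earlier match, so nothing to propose


# The second function definition repeats the tail of the first one,
# so the drafter can copy the body that followed the earlier match.
history = ["def", "foo", "(", "x", ")", ":", "return", "x", "+", "1",
           "def", "bar", "(", "x", ")", ":"]
print(ngram_draft(history))
```

The proposed tokens are only a draft. In a speculative decoder they would still be checked by the main model, so a wrong guess costs time but does not change the output.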

r/LocalLLaMA·tooling·05/07/2026, 07:53 AM·/u/Qwoctopussy

the part of using claude code nobody talks about

AI tools like Claude Code offer 'rented understanding': you ship fast but lose the deep knowledge required to maintain the code later.

The author reflects on the hidden cost of using Claude Code: the erosion of deep code ownership. While features are shipped in record time, the lack of cognitive resistance during the writing process means the developer doesn't truly internalize how the code works. This leads to a 'rented understanding' that evaporates shortly after the task is finished, making future debugging or refactoring difficult. The post warns that while demos focus on the speed of the 'green diff,' they ignore the long-term mental debt of living in a codebase you didn't mentally construct. Ultimately, the developer feels like a tenant in a house they didn't build, where someone else chose the wallpaper.

r/ClaudeAI·opinion·05/07/2026, 07:17 AM·/u/Consistent-Arm-875

Creative Mission day #7: Festival Sunset Moment [Progressive house]

Learn how to craft sophisticated Progressive House tracks in Suno using specific musical terminology and systematic prompt variations.

This 'Creative Mission' post provides a comprehensive template for generating Progressive House music using Suno AI. It focuses on the 'festival sunset' vibe, utilizing technical terms like sidechain compression, Juno-style pads, and 909 hi-hats to guide the model effectively. The author includes three prompt variations to demonstrate how swapping a single element, such as an acoustic piano for an Oberheim synth, or removing vocals entirely, changes the emotional impact. Beyond prompts, it offers historical context on the genre and reference tracks like Eric Prydz’s 'Opus' for benchmarking. This is a high-quality example of how to move beyond simple prompts toward intentional sound design in AI music.

r/SunoAI·tutorial·05/07/2026, 07:00 AM·/u/Grenar

Claude's New "Infinite" Context Window Model, Doubled Rate Limits, Multi-Agent Coordination, & More!

Anthropic doubles Claude's rate limits and previews 'infinite' context windows alongside multi-agent orchestration for autonomous coding.

Anthropic’s 'Code with Claude' developer conference signaled a shift from chatbots to fully autonomous software engineering systems. The company announced a doubling of rate limits for all paid plans, supported by a massive new compute partnership with SpaceX involving 220,000 GPUs. New capabilities include multi-agent orchestration, where a lead agent delegates tasks to specialized sub-agents, and a 'dreaming' feature for iterative self-improvement based on past sessions. Looking ahead, Anthropic teased next-gen models featuring 'infinite' context windows and enhanced 'code taste' focused on maintainability. These updates aim to transform Claude into a persistent, long-horizon reasoning workforce for complex dev workflows.

AI Jason·news·05/07/2026, 06:44 AM·WorldofAI

Xyren New Cyberpunk action MV - "Ray Crash", a fusion of kpop and action film

A high-quality example of AI-driven music video production blending K-pop aesthetics with cyberpunk action, showcasing advanced character consistency.

This AI-generated music video titled 'Ray Crash' by Xyren showcases a sophisticated blend of K-pop visual styles and cyberpunk action sequences. The project demonstrates the current capabilities of AI video tools in maintaining character consistency and complex motion across multiple scenes. It serves as a benchmark for creators looking to fuse music and narrative action without traditional film crews. The visual fidelity suggests the use of advanced generative models, highlighting the shift toward AI-native entertainment and high-end digital production.

r/aivideo·creative_work·05/07/2026, 06:16 AM·/u/BlackPuppeteer

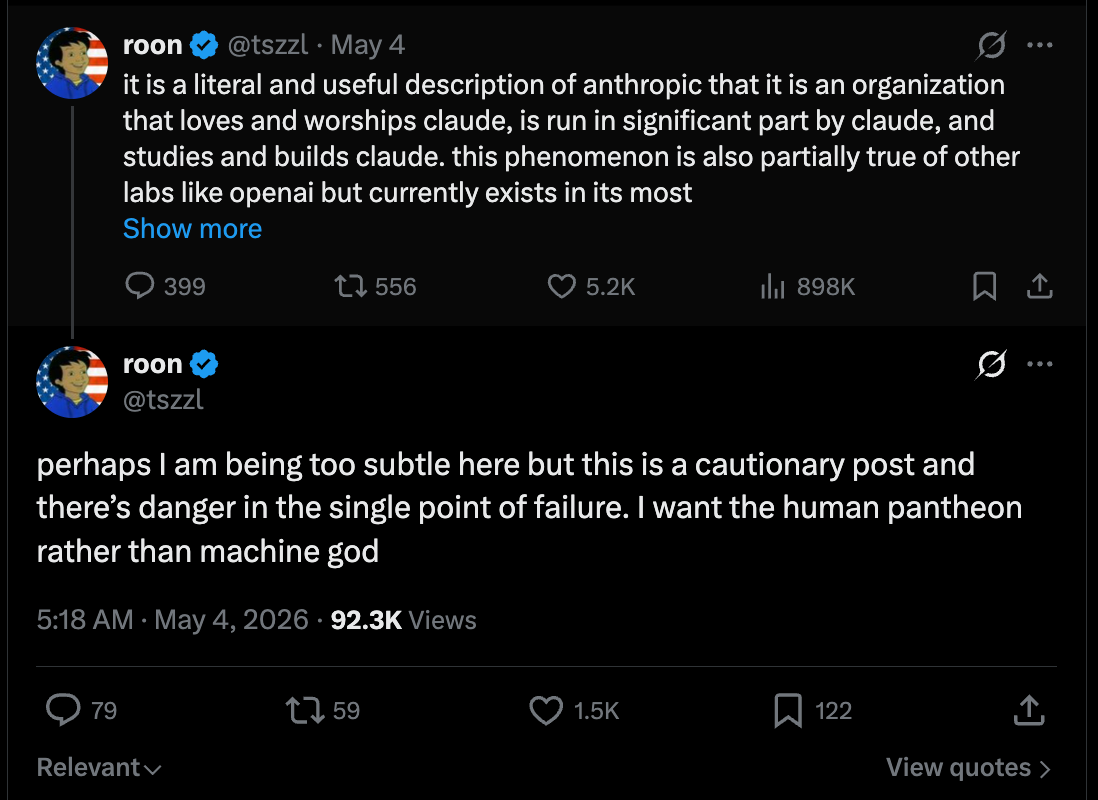

[AINews] Anthropic-SpaceXai's 300MW/$5B/yr deal for Colossus I, ARR growth is 8000% annualized

Anthropic scales up with a $5B compute deal using xAI's Colossus 1 and reports 80x revenue growth, alongside new features for Claude Managed Agents.

Anthropic's second annual developer event focused on massive infrastructure and business growth rather than a new model release. The headline is a strategic partnership with xAI, where Anthropic will utilize the Colossus 1 supercomputer in a deal valued at approximately $5 billion per year. This positions xAI as a "neocloud" provider for its competitors. Anthropic also reported a staggering 8000% annualized ARR growth and introduced three new features for Claude Managed Agents. While some expected a "Claude 4" announcement, the event served as a celebration of recent shipping velocity and a signal of the company's aggressive scaling trajectory.

Latent Space·news·05/07/2026, 05:57 AM

Burned through my Claude limits in a weekend with Claude Design. Here's what I'd do differently

Optimize your Claude Design workflow by locking briefs in chat first and using visual references to save tokens and improve output quality.

A user shares seven practical lessons for mastering Claude Design while managing strict usage limits. The core advice is to finalize the creative brief and copy in standard Claude chat before moving to the design interface to save tokens. Key technical tips include setting up a design system (colors, fonts) immediately and using screenshots instead of descriptive adjectives to guide the AI. For developers, linking specific subdirectories rather than entire repositories avoids context lag and conserves the context window. Finally, the author emphasizes using built-in UI sliders for minor adjustments instead of spending prompts on simple layout changes.

r/ClaudeAI·tutorial·05/07/2026, 05:12 AM·/u/Intelligent-Lynx-953

Google just turned this MMO into an AGI experiment

Robotics companies are using human teleoperation to bypass the data bottleneck, while Google explores MMOs like Eve Online to train AGI in complex social environments.

Wes Roth and Dylan Curious discuss the accelerating pace of humanoid robotics, highlighting Figure's achievement of producing one robot per hour. They explore 1X Technologies' strategy of using human teleoperators to perform household tasks, which serves as a data collection method to train autonomous models. The conversation also touches on Google DeepMind's interest in Eve Online as a training ground for AGI, given the game's complex social and economic systems. The hosts introduce the concept of "robotic slop"—low-level, repetitive household chores that robots could handle before achieving full manual dexterity. This "flywheel effect" suggests that early data collection through teleoperation will lead to exponential improvements in robotic autonomy.

Wes Roth·news·05/07/2026, 03:41 AM·Wes Roth

My Claude dreams at night and remembers everything. Better than mempalace.

A new open-source MCP server that gives Claude persistent long-term memory across sessions using local embeddings and background consolidation.

Developer /u/Mental-Spray-5263 has released iai-mcp, an open-source local daemon designed to provide Claude with persistent long-term memory across different sessions. The tool captures conversations and organizes them into three memory tiers, automatically feeding relevant context back into new chats without manual copy-pasting. It utilizes local neural embeddings for retrieval and AES-256 encryption for security, ensuring data stays private. A standout feature is background consolidation, where the system optimizes and links memories while the machine is idle. Performance benchmarks show over 99% verbatim recall and retrieval times under 100ms, with a session-start overhead of approximately 3,000 tokens.

r/ClaudeAI·tooling·05/07/2026, 03:08 AM·/u/Mental-Spray-5263

THE BELL — Psychological WWII Horror Teaser 2

A high-quality teaser for an AI-generated WWII horror film demonstrating the cinematic potential of Runway's video generation tools.

This teaser, titled "THE BELL," showcases a psychological horror story set during World War II, created using Runway's generative video tools. The project highlights the increasing capability of AI to maintain consistent atmosphere, character design, and lighting across multiple shots. Unlike early AI videos characterized by heavy morphing, this piece demonstrates improved temporal stability and a deliberate cinematic aesthetic. It serves as a benchmark for independent creators looking to produce high-fidelity narrative content without traditional film budgets. The creator, Pinballerz, focuses on mood and historical texture to elevate the AI-generated imagery.

r/runwayml·creative_work·05/07/2026, 03:06 AM·/u/Pinballerz

Qwen3.6 27B uncensored heretic v2 Native MTP Preserved is Out Now With KLD 0.0021, 6/100 Refusals and the Full 15 MTPs Preserved and Retained, Available in Safetensors, GGUFs and NVFP4s formats.

A high-performance, uncensored 27B model that successfully retains advanced Multi-Token Prediction (MTP) features for better local inference.

LLMFan46 has released 'heretic v2', an uncensored fine-tune of the Qwen3.6 27B model. This release is notable for preserving all 15 native Multi-Token Prediction (MTP) modules, which are frequently lost or degraded during the fine-tuning process. The model achieves a very low Kullback–Leibler divergence (KLD) of 0.0021, suggesting it maintains the original model's reasoning capabilities while eliminating refusals. With a refusal rate of only 6%, it is optimized for unrestricted local use. The model is available in multiple formats including Safetensors, GGUF, and NVFP4 to support various hardware setups.
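
For readers unfamiliar with the metric, the sketch below shows the general definition of KL divergence between two next-token probability distributions and how per-token values might be averaged. How the author actually measured the 0.0021 figure (prompt set, token positions, log base) is not stated in the post, so the function names `kl_divergence` and `mean_kld` and the toy distributions are assumptions for illustration only.

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """KL(P || Q) in nats for two probability distributions over the same vocabulary."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        # Terms with zero probability under P contribute nothing.
        if pi > 0.0:
            total += pi * math.log(pi / max(qi, eps))
    return total


def mean_kld(base_dists: Sequence[Sequence[float]],
             tuned_dists: Sequence[Sequence[float]]) -> float:
    """Average per-position KL divergence between base and fine-tuned outputs."""
    values = [kl_divergence(p, q) for p, q in zip(base_dists, tuned_dists)]
    return sum(values) / len(values)


# Toy example (made-up numbers): a three-token vocabulary at two positions.
base = [[0.70, 0.20, 0.10], [0.10, 0.80, 0.10]]
tuned = [[0.68, 0.21, 0.11], [0.10, 0.79, 0.11]]
score = mean_kld(base, tuned)
```

A value near zero means the fine-tuned model assigns almost the same probabilities as the original, which is the sense in which a low KLD is read as preserved behavior.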

r/LocalLLaMA·model_release·05/07/2026, 02:59 AM·/u/LLMFan46

ClaudePlaysPokemon Opus 4.7 run ongoing!

Watch Claude Opus 4.7 tackle Pokemon Red in real-time, demonstrating a massive leap in agentic efficiency and spatial reasoning compared to previous versions.

ClaudePlaysPokemon is a live benchmark project by an Anthropic employee where the latest Claude models play Pokemon Red without human help. The current run features the new Opus 4.7, which is showing a significant performance leap, reaching 5 badges in just 15,779 steps, three times faster than Opus 4.5. The model uses vision to navigate, maintaining its own notes and using spatial logic to solve mazes. Unlike comparable runs with competing models such as GPT-5 or Gemini, this setup uses a lean harness with minimal tools, making it a purer test of raw model cognition. Viewers can watch the live reasoning trace to see how the LLM verifies wall coordinates and plans its next moves.

r/ClaudeAI·creative_work·05/07/2026, 02:54 AM·/u/mobcat_40

Most lyric videos show lyrics too early — so I tested different pacing styles

Improve the emotional impact of your AI music videos by delaying lyric display to prevent viewers from reading ahead of the vocals.

A creator on r/SunoAI shared findings from testing different lyric pacing styles for AI-generated music videos. They observed that displaying lyrics too early causes viewers to read ahead, which diminishes the emotional impact of the vocals. The experiment compared four styles: early display, word-by-word, delayed cinematic, and karaoke-style sync. The delayed cinematic approach proved most effective, as it forces the audience to listen to the performance rather than just reading text. This subtle timing adjustment can make AI music videos feel more professional and immersive by prioritizing the auditory experience.

r/SunoAI·creative_work·05/07/2026, 02:33 AM·/u/Unlikely_Hyena1345

THE BELL — Psychological WWII Horror Teaser 2

A high-quality example of using AI video tools to create a cohesive, atmospheric psychological horror teaser set in WWII.

This post showcases the second teaser for THE BELL, a psychological horror project set during World War II, created using AI video generation tools. The video demonstrates significant progress in maintaining visual consistency and atmospheric storytelling within the AI video medium. It features eerie, photorealistic imagery of soldiers and supernatural elements, highlighting the potential for independent creators to produce cinematic-quality trailers. The project reflects the growing trend of AI cinema, where creators leverage generative models to bypass traditional production costs. While the specific tools used aren't listed in the snippet, the quality suggests advanced platforms like Kling, Runway Gen-3, or Luma Dream Machine.

r/aivideo·creative_work·05/07/2026, 02:29 AM·/u/Pinballerz

ParoQuant: Pairwise Rotation Quantization for Efficient Reasoning LLM Inference

ParoQuant is a new quantization method that preserves the reasoning and logic capabilities of LLMs at low bitrates better than standard techniques.

ParoQuant introduces Pairwise Rotation Quantization, a novel technique designed to minimize information loss during the compression of reasoning-heavy LLMs. Unlike standard quantization methods that often degrade complex logic chains, ParoQuant uses a pairwise approach to handle outlier weights more effectively. The release includes a dedicated GitHub repository and pre-quantized models on HuggingFace for immediate testing. This is particularly significant for users running large reasoning models on consumer hardware where VRAM is limited. Initial benchmarks suggest superior performance in maintaining Chain of Thought (CoT) coherence compared to traditional 4-bit methods.

r/LocalLLaMA·tooling·05/07/2026, 02:07 AM·/u/Total-Resort-3120

Never got good results from Klein? Me neither, til now

Stop using turbo LoRAs with Klein 9B; it achieves peak quality and speed with just 4 steps natively.

A user on r/comfyui discovered why many creators struggle to get high-quality results from the Klein 9B model. The issue stems from incorrectly applying turbo LoRAs or using too many sampling steps, which degrades the output. Klein 9B is designed to be natively fast and performs optimally with only 4 steps without any speed-up modifications. The post includes a downloadable ComfyUI workflow and clarifies licensing terms, stating that while outputs can be used commercially, the model itself requires a commercial license from Black Forest Labs for business use. This finding explains the polarizing reception of the model and provides a clear path to better prompt adherence and speed.

r/comfyui·tutorial·05/07/2026, 01:43 AM·/u/Support_Marmoset

the part nobody warns you about

AI lets you build prototypes at lightning speed, but the resulting technical debt and messy architecture can lead to weeks of painful debugging.

A developer shares a cautionary tale about the hidden costs of rapid AI-assisted development. While the initial prototype was built in just three days, the author spent the following two weeks trapped in a debugging hell caused by AI-generated technical debt. The post highlights issues like 800-line functions, poor naming conventions, and inconsistent state management that agents often introduce. It serves as a reminder that while AI can generate code quickly, the lack of architectural oversight leads to a codebase that feels like inheriting a house from someone who hated you. The author warns that the honeymoon phase of vibe coding is often followed by a grueling, repetitive maintenance phase that is rarely discussed.

r/ClaudeAI·opinion·05/07/2026, 01:05 AM·/u/aerofoto

Need advice on hardware purchasing decision: RTX 5090 vs. M5 Max 128GB for agentic software development

Choosing between Nvidia and Apple for local AI coding: RTX 5090 wins on raw speed for fast iterations, while M5 Max wins on memory capacity for massive codebases.

This discussion evaluates the trade-offs between the RTX 5090 and M5 Max (128GB) for local agentic software development using models like Qwen 3.6 27B. The RTX 5090 provides approximately 3x faster token generation, which is vital for rapid code iteration, but its 32GB VRAM limits context windows and quantization levels (Q4/Q5). Conversely, the M5 Max's 128GB of unified memory supports massive context and higher precision models, though at significantly lower speeds. The author considers a multi-agent setup where a high-level orchestrator manages faster sub-agents for codebase exploration. Technical factors like Multi-Token Prediction (MTP) and MLX optimizations are highlighted as potential game-changers for Apple Silicon's usability in agentic workflows.
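
The memory side of this trade-off can be estimated with simple arithmetic. The sketch below computes approximate weight memory for a 27B-parameter model at different precisions and the headroom left on 32 GB versus 128 GB. The bits-per-weight figures are rough assumptions (quantized formats carry some overhead above their nominal bit width), and the estimate deliberately ignores KV cache, activations, and runtime overhead, all of which reduce the usable headroom further.

```python
def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight memory in decimal GB for a parameter count and precision."""
    return params_billions * bits_per_weight / 8


def headroom_gb(memory_gb: float, params_billions: float, bits_per_weight: float) -> float:
    """Memory left for KV cache and everything else after loading the weights."""
    return memory_gb - weight_gb(params_billions, bits_per_weight)


# ASSUMPTION: rough average bits per weight, not measured values for any specific file.
ASSUMED_BITS = {"~4-bit": 4.5, "~8-bit": 8.5, "16-bit": 16.0}

for label, bits in ASSUMED_BITS.items():
    weights = weight_gb(27, bits)
    print(
        f"{label}: weights ~{weights:.1f} GB, "
        f"headroom on 32 GB: {headroom_gb(32, 27, bits):.1f} GB, "
        f"on 128 GB: {headroom_gb(128, 27, bits):.1f} GB"
    )
```

Under these assumptions a 16-bit copy of the weights alone exceeds 32 GB, which is consistent with the post's point that the RTX 5090 is confined to Q4/Q5-class quantization while the 128 GB machine has room for higher precision and longer context.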

r/LocalLLaMA·tooling·05/07/2026, 12:34 AM·/u/BawbbySmith

The Ballad of Broncosaurus

A high-quality example of AI-driven narrative storytelling, blending western aesthetics with prehistoric themes through multimodal generation.

The Ballad of Broncosaurus is a creative AI-generated music video shared on the r/aivideo subreddit that blends western aesthetics with prehistoric themes. The project demonstrates the current capabilities of multimodal AI storytelling by combining high-fidelity generative video with a thematic AI-composed soundtrack. While the specific tech stack is not disclosed by the author, the visual consistency and temporal stability suggest the use of advanced motion models like Runway Gen-3 or Kling. This piece serves as a benchmark for how individual creators can execute complex narrative concepts without a traditional production crew. It highlights the shift from simple prompt-to-video clips to structured, multi-scene narrative works.

r/aivideo·creative_work·05/07/2026, 12:24 AM·/u/HeadOpen4823

Clippy Reloaded - a really sarky useful Clipboard node with no click.

Streamline your ComfyUI workflow with a new clipboard node that automatically copies data without manual clicks.

Clippy Reloaded is a new custom node for ComfyUI designed to simplify data handling by automatically sending outputs to the system clipboard. Unlike standard clipboard nodes that require manual interaction, this version focuses on a "no-click" experience, triggering whenever a value passes through it. It features a humorous, sarcastic interface reminiscent of the classic Microsoft Office assistant. This tool is particularly useful for creators who frequently move prompts, seeds, or hex codes between ComfyUI and other applications. The node aims to reduce friction in repetitive creative tasks within the node-based environment.

r/comfyui·tooling·05/07/2026, 12:13 AM·/u/shootthesound

Clippy Reloaded - a really sarky useful Clipboard node with no click.

Automatically import your system clipboard into ComfyUI workflows every time you queue a prompt, eliminating manual pasting.

Clippy Reloaded is a custom node for ComfyUI designed to streamline the process of getting text into your workflows. Instead of manually pasting text into a node, this tool automatically pulls whatever is currently in your system clipboard the moment you queue a prompt. This is particularly useful for users who frequently copy prompts, descriptions, or parameters from external websites or LLM chats. The node eliminates repetitive clicking and pasting, acting as a dynamic input source. It is available as an open-source repository on GitHub for easy integration into existing ComfyUI setups.

r/StableDiffusion·tooling·05/07/2026, 12:11 AM·/u/shootthesound

Three browser games built with Claude (25M plays). Two of them are 8,000-line HTML files.

You can build a viral, revenue-generating web business with zero prior coding experience by using Claude/Cursor, provided you focus on shipping and iteration.

A non-developer shared a case study of building three viral browser games (dialed.gg) using Claude and Cursor, reaching 25 million total plays and 200,000 daily active users. The first two games were massive 8,000-line single HTML files, proving that functional, complex apps can be built without following traditional software architecture. The project now generates low five-figure monthly revenue from ads, with operational costs around $3,500/month for AI and hosting. A key lesson is that Claude will not proactively refactor code; the user eventually had to migrate to Next.js and TypeScript to scale. This case study highlights the power of 'vibe coding' for rapid prototyping and monetization.

r/ClaudeAI·creative_work·05/07/2026, 12:09 AM·/u/gteehan

Pandora’s Box | A Greek Mythology AI Short Film

See how current AI video tools can be used to create a visually consistent narrative short film with high production value.

This AI-generated short film reimagines the myth of Pandora's Box through a series of highly detailed cinematic sequences. The creator utilizes advanced video generation models to achieve impressive visual consistency across different shots of characters and environments. It represents a growing trend in the AI video community of moving beyond random clips toward structured, narrative-driven storytelling. The aesthetic leans heavily into epic, dark fantasy visuals with high-fidelity textures and dramatic lighting. While the specific technical stack is not listed, the output highlights significant improvements in temporal stability and character rendering in generative tools.

r/aivideo·creative_work·05/07/2026, 12:06 AM·/u/Outside-Objective828

What If Ancient Japan Was Built in Deep Space | 4K Cinematic Journey

Explore a high-fidelity visual concept blending feudal Japanese aesthetics with sci-fi, showcasing the latest capabilities in AI-driven cinematic world-building.

This creative project, shared on the r/aivideo subreddit, presents a 4K cinematic exploration of a 'Space Japan' concept. The video utilizes advanced AI video generation tools to blend traditional architectural elements, like pagodas and torii gates, with futuristic deep-space environments. It serves as a benchmark for how far AI has come in maintaining stylistic consistency across complex, imaginative prompts. The creator focuses on high-resolution textures and atmospheric lighting to achieve a professional film look. While the specific tools used aren't detailed, the quality suggests the use of top-tier models like Sora or Kling. This work highlights the potential for solo creators to produce high-concept visual narratives without a massive VFX budget.

r/aivideo·creative_work·05/06/2026, 11:45 PM·/u/PenguinBW

Get faster qwen 3.6 27b

Achieve 50 t/s on Qwen 3.6 27B with 100k context on a single RTX 3090 by using MTP GGUFs and a specific llama.cpp branch.

A user on r/LocalLLaMA shared a method to significantly boost inference speeds for the Qwen 3.6 27B model on consumer hardware. By utilizing Multi-Token Prediction (MTP) GGUF files and a specific pull request for llama.cpp, they achieved speeds of 50 tokens per second on an RTX 3090. The setup involves using Q4_K_M quantization for the model and Q4_0 for the K/V cache to fit a 100k context within 19GB of VRAM. The post includes a step-by-step guide for applying the PR and the exact server configuration flags needed. It also mentions a Mac-specific installation via Homebrew for similar performance gains.

r/LocalLLaMA·tooling·05/06/2026, 11:33 PM·/u/admajic

Expertly Kissed

A showcase of AI video's growing ability to handle complex human interactions like kissing without the typical 'melting face' artifacts.

This Reddit post showcases a high-fidelity AI-generated video focusing on a complex human interaction: kissing. Historically, AI video models have struggled with the physical contact and fluid dynamics of two faces merging, often resulting in visual artifacts or 'melting' effects. The video demonstrates significant progress in temporal consistency and realistic skin deformation. While the specific model used isn't explicitly named in the title, the quality suggests the use of latest-generation tools like Luma Dream Machine or Kling. This serves as a benchmark for how far video synthesis has come in handling intimate human movements.

r/aivideo·creative_work·05/06/2026, 11:18 PM·/u/theJunkyardGold

Ernie Image Lora training - my take

Practical insights and visual benchmarks for training LoRAs on the Ernie model, highlighting necessary adjustments to standard training workflows.

The author presents their findings and visual results from training a LoRA on the Ernie image model, a less common alternative to the Stable Diffusion ecosystem. The post includes specific technical insights into the training process, highlighting how hyperparameters like learning rate and rank need adjustment compared to standard SDXL workflows. Visual benchmarks provided via Imgur demonstrate the model's proficiency in handling complex architectural details and specific artistic styles. This contribution is particularly valuable for users looking to diversify their toolkit beyond mainstream models and understand the nuances of cross-architecture fine-tuning. It serves as both a technical guide and a proof-of-concept for the Ernie model's flexibility.

r/StableDiffusion·tutorial·05/06/2026, 10:53 PM·/u/malcolmrey

My Reference Latent Node including Auto Masking and Timesteps per image is out tomorrow

A new ComfyUI node simplifies character consistency with built-in auto-masking and granular timestep control for reference images.

A new custom node for ComfyUI, developed by /u/shootthesound, introduces advanced Reference Latent capabilities for image generation. The node stands out by integrating auto-masking directly, reducing the need for manual mask preparation or external nodes. It also allows users to define specific timesteps for each reference image, providing much finer control over how much influence a reference has during the diffusion process. This is particularly useful for maintaining character consistency or transferring specific styles without overriding the entire generation. The release represents a streamlined approach to complex multi-image conditioning workflows that previously required cumbersome setups.

r/comfyui·tooling·05/06/2026, 10:32 PM·/u/shootthesound

My Reference Latent Node including Auto Masking and Timesteps per image is out tomorrow

A new ComfyUI node that offers precise control over reference images through auto-masking and per-image timestep scheduling.

Developer /u/shootthesound has released ReferenceLatentPlus, a new custom node for ComfyUI designed to refine how reference images influence generations. The tool introduces auto-masking capabilities and allows users to set specific timesteps for each reference image, providing granular control over when and how much a source image affects the output. It includes integrated VAE input and maximum resolution controls, simplifying the pipeline for piping multiple images directly into a workflow. This release addresses the need for more precise element extraction from source material without complex manual masking. The node is now publicly available on GitHub for integration into existing Stable Diffusion setups.

r/StableDiffusion·tooling·05/06/2026, 10:31 PM·/u/shootthesound

[WIP] ComfyUI Powered Klein 2 KV Edit i2i plugin (Chromium)

A browser sidebar plugin that lets you perform advanced image-to-image edits via ComfyUI using the Klein 2 KV model architecture.

Developer /u/deadsoulinside has released a Work-In-Progress (WIP) Chromium extension that integrates ComfyUI directly into the browser sidebar. The tool focuses on image-to-image (i2i) workflows using the Klein 2 KV architecture, which offers high prompt-based control over image manipulation. Users can create, save, and categorize custom prompts within the plugin's interface. To function, it requires a local ComfyUI instance with API mode and CORS enabled, specifically targeting the Flux-2-Klein 9B model and Qwen 3 text encoders. The project is open-source, serving as a template for others to build upon or port to Firefox.

r/StableDiffusion·tooling·05/06/2026, 10:12 PM·/u/deadsoulinside

Uploaded Unsloth Qwen3.6-35B-A3B UD XL models with MTP grafted, here are the results

MTP (Multi-Token Prediction) can significantly speed up local LLM inference, but its effectiveness varies greatly depending on the model architecture and hardware setup.

User /u/havenoammo released GGUF versions of the Qwen3.6-35B-A3B model featuring 'grafted' Multi-Token Prediction (MTP) layers. While MTP previously showed 2-2.5x speedups on dense models like the 27B variant, results for this MoE (Mixture of Experts) version are more modest, ranging from a 6% to 50% increase in tokens per second. The performance seems highly dependent on the specific GPU configuration and quantization level (Q4 vs Q8). The release includes the isolated MTP layers and conversion scripts on HuggingFace, allowing the community to experiment with speculative decoding. These preliminary results suggest that MoE architectures might not benefit as uniformly from MTP as dense models do in current llama.cpp implementations.

r/LocalLLaMA·tooling·05/06/2026, 09:51 PM·/u/havenoammo

Kijai LTX 2.3 With 12 GB of VRAM demo reel

You can now run the high-quality LTX 2.3 22B video model on a standard 12GB VRAM GPU using GGUF quantization and specialized ComfyUI workflows.

A user demonstrated that the LTX 2.3 22B video generation model can produce high-quality 8-second clips on consumer-grade hardware. By utilizing GGUF quantization and specific ComfyUI workflows developed by Kijai, the model fits within 12GB of VRAM, specifically tested on an RTX 3060 with 32GB of system RAM. This is a significant milestone as it brings state-of-the-art open-weight video generation to hobbyist setups. The shared resources include the GGUF model files and optimized workflows available on Civitai. This setup balances performance and accessibility, making local AI video generation more feasible without requiring enterprise-grade hardware.

r/comfyui·tooling·05/06/2026, 09:09 PM·/u/OfficeMagic1

Acestep 1.5 XL Base Workflow?

Get the ComfyUI workflows for ACE-Step 1.5XL text-to-music generation, though be aware of potential vocal quality issues in the latest base version.

A user on r/comfyui has shared direct links to workflows for ACE-Step 1.5XL Base and ACE-Step 1.5 (4b LLM), which are models designed for text-to-music generation. While these workflows allow for integrated audio creation within ComfyUI, the author notes a significant drop in vocal quality in the 1.5XL version compared to the older 4b LLM variant. The issue persists across various prompts and default settings, resulting in audio that sounds low-bitrate or 'off'. This post serves as both a resource for those wanting to experiment with AI music and a warning about current technical limitations. It highlights the ongoing challenges in maintaining audio fidelity when scaling these specific generative models.

r/comfyui·tooling·05/06/2026, 08:48 PM·/u/uhf789

Great results with Qwen3.6-35B-A3B-UD-Q5_K_XL + VS Code and Copilot

A complete, reproducible configuration for running Qwen 3.6-35B locally in VS Code, achieving ~100 t/s for high-quality coding tasks on consumer hardware.

A user on r/LocalLLaMA shared a highly successful local coding setup using the Qwen 3.6-35B model (MoE architecture) via llama.cpp on an AMD R9700 GPU. The post includes the exact startup command for the Vulkan server, a VS Code chatLanguageModels.json configuration, and a complex React/TypeScript prompt that generated a fully functional website. Performance metrics show generation speeds of ~100 tokens/second, though large 38k token prompts cause a 17-second prefill delay. The setup utilizes context checkpointing and flash attention to maintain efficiency. This serves as a practical blueprint for developers looking to replace paid coding assistants with local LLMs.
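
The two figures in this summary (a 17-second prefill for a 38k-token prompt and roughly 100 tokens/second generation) can be combined into a quick latency estimate. The sketch below is plain arithmetic on those reported numbers; the 1,000-token response length is an assumption chosen only for illustration, and the function names are hypothetical.

```python
def prefill_tokens_per_second(prompt_tokens: int, prefill_seconds: float) -> float:
    """Implied prompt-processing throughput from a measured prefill delay."""
    return prompt_tokens / prefill_seconds


def response_seconds(prompt_tokens: int, output_tokens: int,
                     prefill_tps: float, decode_tps: float) -> float:
    """Total wall-clock time: process the prompt, then generate the output."""
    return prompt_tokens / prefill_tps + output_tokens / decode_tps


# Figures reported in the post: 38k-token prompt, 17 s prefill, ~100 tokens/s decode.
prefill_tps = prefill_tokens_per_second(38_000, 17.0)  # roughly 2,235 tokens/s

# ASSUMPTION: a 1,000-token response, used only to illustrate the split.
total = response_seconds(38_000, 1_000, prefill_tps, 100.0)  # about 17 s + 10 s
```

With a prompt that large, prefill accounts for more of the wait than generation does, which is why the post's use of context checkpointing matters for this workflow.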

r/LocalLLaMA·tooling·05/06/2026, 08:47 PM·/u/supracode

Has anyone tried Zyphra 1 - 8B MoE?

Zyphra released ZAYA1-8B, a reasoning MoE that uses less than 1B active parameters to deliver high-end math and logic performance on local hardware.

Zyphra has announced the release of ZAYA1-8B, a new Mixture of Experts (MoE) model focused on reasoning and intelligence density. Despite having 8 billion total parameters, it utilizes fewer than 1 billion active parameters during inference, making it exceptionally efficient for local deployment. The developers claim it outperforms much larger open-weight models in mathematics and logic benchmarks. Notably, the model was trained using AMD hardware and leverages test-time compute to narrow the gap with frontier models like DeepSeek-V3.2. This release highlights a trend toward hyper-efficient, specialized reasoning models that prioritize logic over raw parameter count.

r/LocalLLaMA·model_release·05/06/2026, 08:39 PM·/u/appakaradi

UniReasoner: Using LLMs as "Universal Reasoners" to Fix Prompt Alignment

UniReasoner improves image accuracy by letting an LLM critique its own visual draft before the final diffusion step.

UniReasoner is a new framework designed to solve the "understanding-generation gap" in text-to-image models. It leverages the fact that multimodal LLMs are better at verifying images than generating them from scratch. The system uses a three-stage pipeline where an LLM first creates a coarse visual draft using discrete tokens. It then performs a "grounded evaluation" to identify errors like incorrect object counts or missing elements. Finally, a diffusion model such as SANA uses the original prompt, the draft, and the critique to produce a highly accurate final image. This method moves beyond simple prompt rewriting by using SigLIP-based discretization for spatial reasoning.

r/StableDiffusion·model_release·05/06/2026, 08:39 PM·/u/Formal_Drop526

Age of Automata - Trailer for my Steampunk Series

A high-quality example of AI-driven world-building and cinematic storytelling in the steampunk genre, showcasing impressive visual consistency.

This Reddit post showcases a cinematic trailer for an AI-generated series titled 'Age of Automata.' The creator utilizes advanced generative video models to craft a cohesive steampunk aesthetic, featuring intricate mechanical designs and atmospheric environments. The project demonstrates the current capability of AI to maintain visual consistency across multiple shots, which remains a significant challenge in AI filmmaking. While the specific tools used are not explicitly detailed in the post, the visual fidelity suggests the use of high-end platforms like Runway Gen-3 or Luma Dream Machine. It serves as a benchmark for hobbyists looking to move from isolated clips to structured narrative content.

r/aivideo·creative_work·05/06/2026, 08:37 PM·/u/AdComfortable5161

The GB10 Solution Atlas is now open source, the inference engine made for the community with breakneck inference speeds (Qwen3.6-35B-FP8 100+ tok/s)

Atlas is a Rust-based open-source inference engine whose developers claim 3x the throughput of vLLM on Blackwell hardware, achieved by removing Python overhead.

Atlas is a newly open-sourced inference engine written in pure Rust and CUDA, designed to bypass the performance bottlenecks of the standard Python/PyTorch stack. Optimized for NVIDIA's Blackwell-based GB10 chip, it achieves over 100 tokens per second on Qwen3.5-35B models using NVFP4 precision and Multi-Token Prediction (MTP). The engine ships as a lightweight 2.5GB Docker image with sub-2-minute cold starts and natively supports the OpenAI and Anthropic API formats. By rewriting the stack from HTTP handlers to kernel dispatch, the developers claim a 3x throughput increase over vLLM. Future updates aim to bring these optimizations to AMD Strix Halo and RTX 6000 Blackwell hardware.

r/LocalLLaMA·tooling·05/06/2026, 08:36 PM·/u/Live-Possession-6726

LTX 2.3 is pretty much all I use for video gen at this point -- Scene from my current story-driven fantasy project -- Info on process/workflow in comments.

LTX 2.3 is emerging as a top-tier choice for consistent, story-driven AI video, with practical workflows now available for independent creators.

A creator showcases a high-quality fantasy scene generated using LTX 2.3, a video generation model from Lightricks. The post highlights the model's capability for narrative-driven projects, with the author claiming it has become their primary tool for video production. Unlike typical AI video demos, this project focuses on temporal consistency and story-driven aesthetics rather than just visual spectacle. The author provides specific workflow details in the comments, offering insights into how to achieve professional-grade results. This indicates a growing maturity in open or accessible video models for independent creators.

r/StableDiffusion·creative_work·05/06/2026, 08:33 PM·/u/foxdit

Most people seem obsessed with token generation speed, but isn’t prefill the real bottleneck? Am I missing something?

For agentic workflows and large contexts, prefill speed (how fast the model 'reads' the prompt) is a bigger bottleneck than generation speed.

A technical discussion on r/LocalLLaMA highlights that while benchmarks prioritize generation speed (tokens/s), the prefill stage is the actual bottleneck for many advanced users. Prefill is the initial phase where the model processes the input prompt before generating the first token. For agentic workflows involving large codebases or long RAG contexts, waiting for the model to 'ingest' data takes significantly longer than reading the output. The author notes that even 15 t/s generation is acceptable, but slow prefill (e.g., 300 t/s on a Qwen 27B) creates noticeable lag. This suggests that hardware and software optimizations should prioritize prompt processing for professional, high-context use cases.
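
To make the trade-off concrete, here is a minimal sketch that compares time spent in prefill with time spent generating. The 300 t/s prefill and 15 t/s generation rates are the ones quoted in the discussion; the prompt sizes and the 300-token reply length are hypothetical values chosen for illustration, and prompt caching is ignored.

```python
# Time before the first token vs. time spent generating.
# Rates are from the discussion; prompt and reply sizes are made-up examples.


def prefill_seconds(prompt_tokens: int, prefill_tps: float) -> float:
    """Seconds spent processing the prompt before the first output token."""
    return prompt_tokens / prefill_tps


def generation_seconds(output_tokens: int, gen_tps: float) -> float:
    """Seconds spent producing the reply once prefill has finished."""
    return output_tokens / gen_tps


if __name__ == "__main__":
    PREFILL_TPS = 300.0   # quoted prefill rate
    GEN_TPS = 15.0        # quoted "acceptable" generation rate
    OUTPUT_TOKENS = 300   # hypothetical short agent reply

    for prompt_tokens in (2_000, 16_000, 64_000):  # hypothetical context sizes
        wait = prefill_seconds(prompt_tokens, PREFILL_TPS)
        gen = generation_seconds(OUTPUT_TOKENS, GEN_TPS)
        share = wait / (wait + gen)
        print(
            f"{prompt_tokens:>6} prompt tokens: {wait:6.1f}s prefill, "
            f"{gen:5.1f}s generation ({share:.0%} of the wait is prefill)"
        )
```

Under these assumptions the short reply takes the same time to generate in every case, while the prefill wait grows linearly with context, which is the pattern the author describes.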

r/LocalLLaMA·opinion·05/06/2026, 08:02 PM·/u/wbulot

Is there any interest for a Character dataset evaluation script ?

A new tool is being developed to help curate LoRA training datasets by detecting face mirroring and scoring image quality and variety.

A Reddit user has developed a Python script with a Gradio interface designed to optimize datasets for training LoRAs of real people. The tool addresses two specific problems: detecting mirrored faces to prevent unnaturally symmetrical results and providing a relevancy score based on image quality and variety. By filtering out redundant or low-quality images, the script aims to improve the final model's fidelity. The script is still in the feedback stage, and the author is gauging community interest before a public release. This could be a valuable utility for hobbyists struggling with manual dataset curation.

r/StableDiffusion·tooling·05/06/2026, 07:54 PM·/u/HumbleSousVideGeek

Exaggerated PCI-E bandwidth concerns?

PCIe bandwidth concerns for multi-GPU setups are likely exaggerated; even a 4.0 x4 link handles high-speed prefill for mid-range cards using vLLM and Tensor Parallelism.

A user on r/LocalLLaMA ran benchmarks to test whether PCIe bandwidth is a true bottleneck for multi-GPU local LLM setups on consumer hardware. Using two RTX 5060 Ti 16GB cards with vLLM and Tensor Parallelism (TP=2), they found that peak bandwidth during prefill reached only 3-4 GB/s. That is roughly 40-50% of the ~7.9 GB/s a PCIe 4.0 x4 slot offers per direction, suggesting that even limited chipset-connected slots are sufficient for mid-range cards. The test involved high-speed quants like NVFP4, achieving prefill rates up to 1700 t/s. These findings suggest hobbyists can scale to 3 or 4 GPUs using M.2 adapters without needing expensive workstation-grade motherboards.
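
The headroom figure can be checked with a small calculation. The sketch below assumes the standard PCIe 4.0 signalling rate (16 GT/s per lane with 128b/130b encoding) and ignores protocol overhead beyond line encoding; the 3-4 GB/s peak is the number reported in the post.

```python
# PCIe 4.0 link arithmetic: 16 GT/s per lane with 128b/130b line encoding.
# Packet/protocol overhead is ignored, so real-world ceilings are a bit lower.

PCIE4_GT_PER_S = 16.0            # gigatransfers per second, per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b encoding


def link_bandwidth_gb_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe 4.0 link in GB/s."""
    return PCIE4_GT_PER_S * ENCODING_EFFICIENCY * lanes / 8  # 8 bits per byte


def utilization(observed_gb_s: float, lanes: int) -> float:
    """Fraction of the theoretical link bandwidth that is actually used."""
    return observed_gb_s / link_bandwidth_gb_s(lanes)


if __name__ == "__main__":
    lanes = 4
    print(f"PCIe 4.0 x{lanes}: {link_bandwidth_gb_s(lanes):.2f} GB/s per direction")
    for observed in (3.0, 4.0):  # peak range reported in the post
        print(f"{observed:.0f} GB/s observed -> {utilization(observed, lanes):.0%} of the link")
```

On these numbers the reported peak uses somewhere between about two fifths and one half of an x4 link, which is consistent with the post's conclusion that the slot is not the limiting factor for this workload.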

r/LocalLLaMA·news·05/06/2026, 07:54 PM·/u/ziphnor

ZAYA1-8B: Frontier intelligence density, trained on AMD

ZAYA1-8B is a new 8B model that claims to outperform Llama 3.1 8B, proving that high-density intelligence can be achieved using AMD-based training stacks.

Zyphra has released ZAYA1-8B, a new language model designed to maximize intelligence density within the 8-billion parameter class. The model reportedly outperforms Llama 3.1 8B and Gemma 2 9B across several key benchmarks, including MMLU and GSM8K. Notably, ZAYA1-8B was trained entirely on AMD Instinct MI300X accelerators, showcasing a viable alternative to the NVIDIA-dominated training ecosystem. This release targets developers looking for high-performance models that can run efficiently on consumer hardware or edge devices. The architecture focuses on better data efficiency and architectural refinements to squeeze more reasoning capability out of fewer parameters.

r/LocalLLaMA·model_release·05/06/2026, 07:43 PM·/u/carbocation

Making a full length fantasy movie ( Magehold )

A showcase of how AI video tools are maturing from short experimental clips into full-length, consistent narrative filmmaking.

Independent creator MosskeepForest has unveiled 'Magehold', an ambitious project aiming to produce a full-length fantasy movie using AI video generation tools. The project demonstrates the current state of the art in maintaining visual consistency across multiple scenes, a significant challenge for generative video. It features high-fidelity character designs and expansive environmental storytelling, moving beyond the typical 5-second clips seen on social media. This effort represents a growing trend of 'solo-studio' productions where AI handles the heavy lifting of visual effects and cinematography. The release serves as a benchmark for how hobbyists can leverage current LLM and video models to build complex, long-form narratives.

r/aivideo·creative_work·05/06/2026, 07:36 PM·/u/MosskeepForest

Anyone else tried this RefineAnything LoRA? Pretty impressed so far

A new ComfyUI plugin and LoRA workflow for surgical image refinement, perfect for fixing text, logos, and small details without affecting the rest of the image.

The RefineAnything project provides a specialized LoRA and workflow for surgical image repairs, specifically targeting text, logos, and product labels. A new ComfyUI plugin, ComfyUI-RefineNode, has been released to automate the manual labor of mask preparation, reference alignment, and pasting back the refined region. The plugin is model-agnostic, meaning it can enhance any local detail repair workflow, not just the RefineAnything LoRA. It supports both scribble masks and bounding boxes, ensuring the rest of the image remains 100% untouched. A technical tip from the developer suggests avoiding the 'index_timestep_zero' method to prevent noticeable color shifts during the process.

r/StableDiffusion·tooling·05/06/2026, 07:32 PM·/u/liangkun43

Google's Design.md is a design team in a file

Use .md files to store your design system's DNA (typography, colors, motion) and attach them to AI agent prompts to ensure consistent, high-end aesthetics across your entire app.

Greg Isenberg and designer Meng To discuss 'design.md,' a workflow that uses structured Markdown files to define a project's visual DNA for AI agents. By providing specific instructions on typography, spacing, and motion in an .md file, builders can prevent 'design drift'—the tendency for AI-generated UI to become generic after the initial prompt. The method allows non-designers to maintain consistency across different platforms like Lovable, Cursor, and v0. Meng To emphasizes that while 'vibe-coding' is popular, professional results require a 'design memory' that the AI can reference. This approach bridges the gap between high-level creative vision and the technical execution of AI-assisted development.

Greg Isenberg·tooling·05/06/2026, 07:13 PM·Greg Isenberg

OpenAI built a networking protocol with AMD, Broadcom, Intel, Microsoft, and NVIDIA to fix AI supercomputer bottlenecks

OpenAI and tech giants released MRC, an open-source protocol that makes training massive models faster and cheaper by optimizing how 100,000+ GPUs communicate.

OpenAI, in collaboration with industry leaders like NVIDIA, Microsoft, and AMD, has introduced MRC (Multi-Path Remote Communication), an open-source networking protocol designed for AI supercomputing. The protocol addresses the massive data bottlenecks inherent in training LLMs across tens of thousands of GPUs. By enabling data transmission across hundreds of paths simultaneously, MRC reduces the required network switch layers from four down to just two. This architecture supports clusters of over 100,000 GPUs while significantly lowering power consumption and hardware costs. Currently, the protocol is operational within OpenAI's Stargate supercomputer project, signaling a shift towards more efficient, standardized AI infrastructure.

The Decoder·tooling·05/06/2026, 07:13 PM·Matthias Bastian

vLLM V0 to V1: Correctness Before Corrections in RL

vLLM V1 is a major upgrade optimized for RL and reasoning models, focusing on output correctness and significantly better inference performance.

vLLM is transitioning from V0 to V1, marking a major architectural overhaul focused on Reinforcement Learning (RL) workflows. The update emphasizes a 'Correctness Before Corrections' philosophy, addressing the critical need for high-fidelity outputs in complex reasoning tasks. This shift is particularly relevant for serving modern models like DeepSeek-R1 that rely on long-chain reasoning and RL-based optimization. The new version aims to significantly reduce overhead and improve throughput while maintaining strict output validation. It represents a move towards more robust, production-ready inference for the next generation of agentic and reasoning LLMs.

Hugging Face Blog·tooling·05/06/2026, 07:06 PM

Anthropic taps SpaceX's Colossus-1 data center for 220,000 GPUs to power Claude

Anthropic is scaling up massively by leasing SpaceX's Colossus-1 data center, which will double Claude Code rate limits and boost API capacity for Opus models.

Anthropic is taking over the full computing capacity of SpaceX's Colossus-1 data center, utilizing over 220,000 NVIDIA GPUs and 300 megawatts of power. The facility is expected to be operational within a month, providing a massive boost to Anthropic's training and inference capabilities. Consequently, the company is doubling rate limits for Claude Code and increasing API limits for its high-end Opus models. This scale of infrastructure suggests that Anthropic is gearing up for the release of significantly more powerful frontier models. The partnership highlights the intensifying competition for massive-scale compute resources in the AI industry.

The Decoder·news·05/06/2026, 06:42 PM·Matthias Bastian

[Z-Image] REALSTAGRAM_ZIMG — subtle realism LoRA for Z-Image Turbo (works with any character LoRA)

Enhance Z-Image Turbo generations with a subtle, candid Instagram realism LoRA that stacks perfectly with character models.